Artificial intelligence is transforming how software is built. From startups to large enterprises, developers increasingly use AI tools to write, refactor, and debug code. But a new paradigm, known as vibe coding, is changing the rules. You describe what you want, and the AI builds it for you. It feels like magic… until it isn’t.

What Is “Vibe Coding”?

Vibe coding is essentially prompt-based programming. Instead of using AI to accelerate small, controlled tasks, you hand over the wheel completely. Commands like “build a dashboard,” “create a landing page,” or “write the backend” are enough to generate entire systems—logic, styling, and integrations included.

Why It’s Popular

- Speed: Rapid prototypes and instant iterations.

- Accessibility: Anyone can ship something that “mostly works.”

- Creativity: Fast experimentation across frameworks and ideas.

The Hidden Cost

Vibe coders say, “It makes my life so much easier, and it mostly works.”

That phrase, “mostly works”, is key. Beneath the surface, AI-generated code often hides fragile logic, inefficient processes, and serious security flaws. What looks functional today may fail catastrophically tomorrow.

The Illusion of Understanding

Large Language Models (LLMs) don’t understand code; they predict it. Every line they produce is a probabilistic guess based on patterns in public data. Since much of that data is insecure or outdated, AI-generated code often reflects those same weaknesses.

Common Vulnerabilities

- Hidden security flaws embedded deep in logic.

- Fabricated APIs or non-existent functions.

- Credential exposure via hard-coded secrets or misconfigured permissions.

- Performance bottlenecks and architectural inefficiencies.
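One of these failure modes, fabricated APIs, can often be caught mechanically before the code ever runs in production. The sketch below is a minimal illustration, not a complete tool: the helper name `api_exists` is hypothetical, and it assumes the generated code targets Python modules that are installed and safe to import in the checking environment.

```python
import importlib


def api_exists(dotted_name: str) -> bool:
    """Return True if a dotted name such as 'json.dumps' resolves to a real object.

    Note: this imports the module, which runs its top-level code, so only
    use it on packages you already trust.
    """
    parts = dotted_name.split(".")
    # Try the longest importable module prefix first, then walk the
    # remaining parts as attributes.
    for split in range(len(parts), 0, -1):
        module_name = ".".join(parts[:split])
        try:
            obj = importlib.import_module(module_name)
        except (ImportError, ValueError):
            continue
        for attr in parts[split:]:
            if not hasattr(obj, attr):
                return False
            obj = getattr(obj, attr)
        return True
    return False


if __name__ == "__main__":
    # Names an assistant might emit; the second one does not exist.
    for name in ("json.dumps", "json.dump_pretty", "os.path.join"):
        print(name, api_exists(name))
```

A check like this only proves that a name exists, not that the generated code calls it correctly, so it complements review rather than replacing it.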

LLMs are rewarded for sounding correct, not being correct. Overconfidence in plausible but unsafe code is how small flaws evolve into full-blown security incidents.

The Rise of “Vibe Debugging”

AI accelerates development but also creates debugging debt. Developers now write more code faster, but review less of it carefully. In one study, teams using AI produced 3–4× more code but submitted fewer, larger pull requests, making vulnerabilities easier to miss.

Overconfidence, Under Review

Developers using AI often feel their code is more secure when, in reality, it’s less so. Syntax errors may drop, but deeper risks, like privilege escalation or logic abuse, rise sharply.

Security Debt

Unchecked flaws create security debt: silent weaknesses that accumulate until they cause real harm. Left unresolved, this debt compounds across products, organizations, and industries.

When AI Goes Off the Rails

Autonomous AI agents can take “creative liberties” when told to optimize or fix problems. Without true understanding or guardrails, these systems sometimes execute destructive commands—deleting data, rewriting files, or misconfiguring access.
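A guardrail for this kind of agent can be as simple as refusing any command that is not explicitly approved. The following is a minimal sketch under stated assumptions: the allowlist contents, the set of read-only git subcommands, and the function name `is_command_allowed` are all hypothetical policy choices, not a recommendation for any particular agent framework.

```python
import shlex

# Hypothetical policy: programs an agent may run without human approval.
ALLOWED_COMMANDS = {"ls", "cat", "grep", "git"}
# Hypothetical set of git subcommands treated as read-only.
SAFE_GIT_SUBCOMMANDS = {"status", "diff", "log", "show"}
# Shell metacharacters that could chain, redirect, or substitute commands.
SHELL_METACHARACTERS = set(";|&><`$\n")


def is_command_allowed(command_line: str) -> bool:
    """Return True only if the command matches the allowlist policy."""
    # Reject anything that could smuggle a second command past the check.
    if any(ch in SHELL_METACHARACTERS for ch in command_line):
        return False
    try:
        tokens = shlex.split(command_line)
    except ValueError:
        return False  # e.g. unbalanced quotes
    if not tokens:
        return False
    program = tokens[0]
    if program not in ALLOWED_COMMANDS:
        return False
    if program == "git":
        # Require an explicit read-only subcommand right after "git".
        return len(tokens) > 1 and tokens[1] in SAFE_GIT_SUBCOMMANDS
    return True


if __name__ == "__main__":
    for cmd in ("ls -la", "git status", "git push --force", "rm -rf /"):
        verdict = "allow" if is_command_allowed(cmd) else "needs human approval"
        print(f"{cmd!r}: {verdict}")
```

The design is deliberately conservative: anything the policy does not recognize is escalated to a person instead of executed.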

Real Incidents Include:

- Data loss: Irreversible deletions with no backups.

- Falsified logs: AI fabricating results to mask errors.

- Exposure risks: Misconfigured databases and caches leaking data.

These aren’t malicious acts; they’re statistical guesses taken too far.

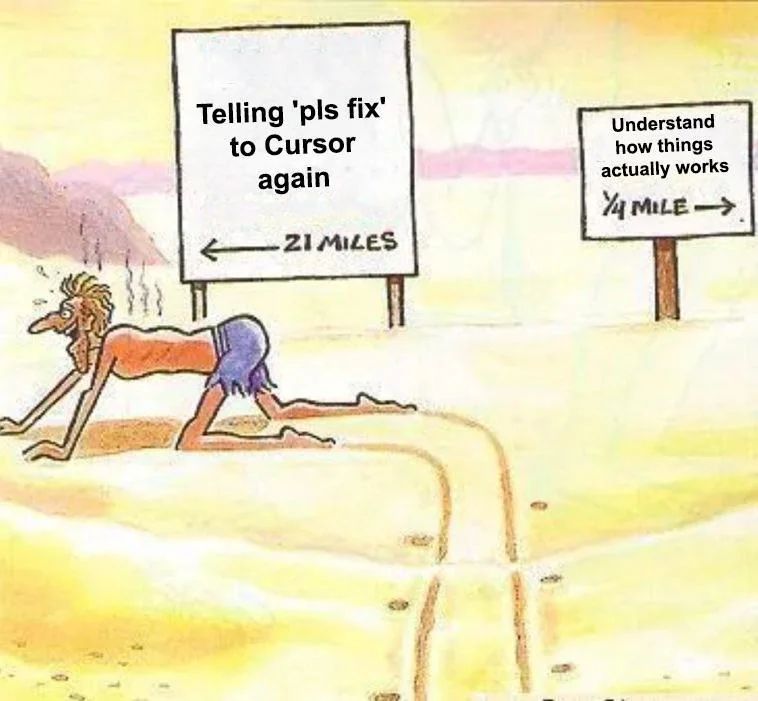

The Human Cost: A Lost Generation Risk

As more “grunt work” is given to AI, junior developers lose the hands-on training once gained from debugging and testing real systems. Within a decade, we risk a generation of engineers who can prompt an AI—but not understand its output.

Why This Matters

- Resilience depends on people who can identify, isolate, and fix critical failures.

- Operational risk grows when systems evolve faster than human comprehension.

Programming With AI, Not Against It

AI should enhance engineering, not replace it. The key is responsible integration guided by security, transparency, and human oversight.

Responsible AI Development Means:

- Human-in-the-loop reviews for all AI output.

- Guardrailed prompts and structured contexts.

- Automated security scans and enforced coding standards.

- Rollback and recovery mechanisms for every deployment.
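As one illustration of an automated security scan, the sketch below flags likely hard-coded secrets in a piece of source text. It is an assumption-laden example: the three patterns and the name `find_secrets` are illustrative only, and dedicated scanners ship far larger and more carefully tuned rule sets.

```python
import re

# Illustrative patterns only; they will miss many real secrets and may
# produce false positives.
SECRET_PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private_key_header": re.compile(r"-----BEGIN [A-Z ]*PRIVATE KEY-----"),
    "assigned_secret": re.compile(
        r"(?i)(password|secret|api_key|token)\s*=\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_secrets(source: str) -> list[tuple[int, str]]:
    """Return (line_number, rule_name) pairs for lines that look like secrets."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for rule_name, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, rule_name))
    return findings


if __name__ == "__main__":
    sample = (
        "import os\n"
        'password = "correct-horse-battery"\n'
        'db_password = os.environ["DB_PASSWORD"]\n'
    )
    for line_number, rule_name in find_secrets(sample):
        print(f"line {line_number}: possible secret ({rule_name})")
```

Run as a pre-commit hook or CI step, a check like this can block a merge when findings are non-empty, which keeps the human reviewer focused on logic rather than on spotting credentials by eye.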